Picture this: a shopper opens ChatGPT and types “find me a waterproof carry-on under $200 that ships in two days.” The agent queries multiple merchant catalogs simultaneously, filters against every stated constraint, and returns three options.

The brands that didn’t make the list were not eliminated because of bad products or weak brand equity. They were eliminated because their product data was incomplete, their inventory status was stale, or their catalog had no structured attributes the agent could parse.

Agentic commerce is already here, and most retailers are not ready for it. McKinsey projects that $900B–$1T in US commerce will flow through AI agents by 2030. Even conservative estimates represent a channel that will dwarf most retailers’ current investment in SEO or paid social.

The challenge is that optimizing for AI shopping agents requires a different approach. Twenty years of ecommerce best practice — keyword-rich page copy, lifestyle photography, storytelling PDPs — does almost nothing for agent discoverability. Agents do not browse. They parse. And if your data is not machine-consumable, your products are invisible.

Optimizing for AI shopping agents runs across five layers practitioners can act on now: machine-readable catalog data, pricing accuracy and inventory freshness, agent-accessible APIs and protocols, first-party behavioral signals, and measurement infrastructure. The brands that get this right early will build a compounding advantage latecomers will struggle to close.

What AI Shopping Agents Actually Evaluate (and What They Ignore)

Understanding how to optimize for AI shopping agents starts with understanding how they make decisions. Agents operating on behalf of shoppers, whether Perplexity Shopping, Amazon Alexa Plus, or ChatGPT’s shopping mode, do not “visit” your website the way a human does. They query structured data sources: product feeds, APIs, schema markup, and indexed catalog data. They then match the results against explicit shopper constraints and implicit preference signals derived from the user’s history.

This is the core conceptual shift. Traditional SEO assumes a human reader who can be persuaded by narrative, impressed by imagery, and nudged by social proof on the page. Agent optimization assumes a machine evaluator that is checking fields, validating values, and scoring fit against a constraint set.

The five signals agents use most consistently to evaluate and select products are:

- Attribute completeness: Does the product record contain all relevant specifications? A marine motor without horsepower listed, a carry-on without dimensions, or a paint set without listed colors simply cannot match a constraint-based query.

- Pricing accuracy and freshness: Is the price current and consistent across data sources? Stale or inconsistent pricing is a trust signal failure.

- Inventory status: Is the product actually available? Agents operating on “ships within 2 days” constraints require live inventory data, not yesterday’s snapshot.

- Trust signals: Reviews, ratings, seller reputation, return policy, and shipping time estimates. These are decision factors when two products match equally on specs.

- Contextual fit: Does the product satisfy the full constraint set, including secondary requirements like material, compatibility, or weight?

What agents discount or ignore entirely: promotional copy, brand storytelling, lifestyle photography (except in vision-capable agents), and generic category descriptions written for keyword density. A product page that ranks well in Google because of a thoughtfully written 800-word description may be completely invisible to an agent if the structured data underneath is sparse.

A growing body of practitioners refers to this discipline as “agent experience optimization” or AEO – the practice of structuring product data so that AI systems can reliably parse, evaluate, and recommend your products. It is not a replacement for traditional SEO. It is an additional layer that operates at the infrastructure level rather than the content level.

Platforms designed for AI-powered product discovery are built to analyze product attributes and categories in the way agents do — which makes them useful both for on-site search and for agent-facing catalog readiness.

Understanding the types of AI shopping agents in the market helps clarify which data signals matter most for each platform. Different agents weigh different signals based on their design, but attribute completeness and pricing freshness are universal requirements.

Layer 1: Make Your Product Catalog Readable By Machines

This is the highest-leverage optimization layer, and the one where most retailers have the largest gaps. Catalog problems are often invisible to human visitors — a product page can look great and still be invisible to an agent.

Structured Data and Schema Markup

Every product page needs valid schema.org Product markup (JSON-LD is the preferred format) with the following fields populated: name, description, sku, brand, offers (containing price, priceCurrency, availability, and url), aggregateRating, and image. If any of these are missing, agents parsing your structured data have incomplete information and will frequently skip the product in favor of a more complete alternative.

Google’s product structured data documentation and Schema.org specifications establish these as core fields for product markup, and they map directly to the constraint sets agents evaluate against. A product with complete metadata but thin page copy will consistently outperform a beautifully written PDP with incomplete schema.
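As a rough illustration of the field list above, the following Python sketch builds Product JSON-LD from an internal product record and reports any required field that is empty. The record shape (keys such as `images`, `in_stock`, `review_count`), the helper names, and the sample product are all hypothetical; only the schema.org property names come from the list above.

```python
import json

# Required fields as listed in this section.
REQUIRED_FIELDS = ("name", "description", "sku", "brand", "offers", "aggregateRating", "image")
REQUIRED_OFFER_FIELDS = ("price", "priceCurrency", "availability", "url")


def build_product_jsonld(product: dict) -> dict:
    """Map a (hypothetical) internal product record to schema.org Product markup."""
    in_stock = product["in_stock"]
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": product["name"],
        "description": product["description"],
        "sku": product["sku"],
        "brand": {"@type": "Brand", "name": product["brand"]},
        "image": product["images"],
        "offers": {
            "@type": "Offer",
            "price": f'{product["price"]:.2f}',
            "priceCurrency": product["currency"],
            "availability": "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock",
            "url": product["url"],
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": product["rating"],
            "reviewCount": product["review_count"],
        },
    }


def missing_fields(markup: dict) -> list:
    """Return the required fields that are absent or empty in the markup."""
    missing = [field for field in REQUIRED_FIELDS if not markup.get(field)]
    offer = markup.get("offers") or {}
    missing += [f"offers.{field}" for field in REQUIRED_OFFER_FIELDS if not offer.get(field)]
    return missing


# Illustrative record only; every value here is made up.
example = {
    "name": "Waterproof Carry-On 40L",
    "description": "Hard-shell carry-on, 55 x 35 x 23 cm, 2.9 kg, waterproof zipper.",
    "sku": "CARRY-40-BLK",
    "brand": "ExampleBrand",
    "images": ["https://example.com/img/carry-40-blk.jpg"],
    "price": 179.0,
    "currency": "USD",
    "in_stock": True,
    "url": "https://example.com/p/carry-40-blk",
    "rating": 4.6,
    "review_count": 1200,
}

markup = build_product_jsonld(example)
print('<script type="application/ld+json">')
print(json.dumps(markup, indent=2))
print("</script>")
print("Missing fields:", missing_fields(markup))
```

Running a check like `missing_fields` across every PDP is one way to turn the field list into a repeatable audit rather than a one-time review.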

Attribute Completeness at the SKU Level

This is where most catalog audits uncover the most revenue-at-risk SKUs. Agents filter on attributes. A product without the relevant spec for its category cannot match an attribute-based query, no matter how well it matches the category intent.

Practical audit approach: identify your top 20% of revenue-producing SKUs and verify every category-relevant attribute is populated with a specific value, not generic copy. A marine product category might require horsepower, engine type, shaft length, fuel type, and weight. If you have 7 of 12 expected attributes filled, you are invisible to any query that filters on the missing five.
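The audit described above can be sketched as a small scoring function. This is a minimal sketch, assuming each SKU's attributes are available as a dictionary and that your team maintains a list of expected attributes per category; the attribute keys and the sample outboard motor are hypothetical.

```python
def attribute_completeness(product_attrs: dict, expected: list) -> tuple:
    """Return (score, missing) where score is the share of expected attributes populated."""
    if not expected:
        return 1.0, []
    # Treat None, empty strings, and empty lists as "not populated"; 0 counts as a value.
    missing = [name for name in expected if product_attrs.get(name) in (None, "", [])]
    score = (len(expected) - len(missing)) / len(expected)
    return score, missing


# Expected attributes for a marine motor, using the five named in this section.
MARINE_MOTOR_ATTRS = ["horsepower", "engine_type", "shaft_length", "fuel_type", "weight"]

# Hypothetical SKU record with two gaps.
outboard = {
    "horsepower": 9.9,
    "engine_type": "four-stroke",
    "shaft_length": "",
    "fuel_type": "gasoline",
    "weight": None,
}

score, missing = attribute_completeness(outboard, MARINE_MOTOR_ATTRS)
print(f"Completeness: {score:.0%}, missing: {missing}")
```

The `missing` list matters as much as the score: it tells the merchandising team exactly which attribute-based queries the SKU cannot match today.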

Natural Language Alignment in Descriptions

Agents do parse product description fields, but not for marketing persuasion. They parse for factual, attribute-rich content. The practical rewrite principle: front-load specifications.

“Waterproof Gore-Tex jacket, 3-layer construction, weight 480g, packable to fist size, available in sizes XS–3XL, compatible with standard pack covers” outperforms “Experience the ultimate in outdoor adventure with our premium performance jacket” from an agent evaluation standpoint. The first version contains parseable facts. The second contains no useful structured signal.

Category Taxonomy Clarity

Agents navigate catalogs via taxonomy. Ambiguous categories (“accessories” containing both jewelry and phone cases, or “outdoor” spanning both furniture and apparel) create a mismatch between query intent and returned results. Taxonomy should be specific, hierarchical, and consistently applied across every SKU in that category. Inconsistent taxonomy is one of the most common reasons AI search returns mixed-intent results.

How Hobbycraft Solved the Catalog Structure Problem

Hobbycraft, the UK’s leading arts and crafts retailer with 27,000+ SKUs, had a concrete version of this challenge.

Broad searches like “paint” were surfacing unbalanced mixes — children’s kits next to professional artist supplies — without any intent distinction. By implementing Loomi AI’s natural language understanding with conditional slot merchandising, Hobbycraft gave its catalog the intent-aligned structure that both shoppers and AI systems could navigate. The outcome: a +21% increase in AOV in the “paint” category and a +7.3% increase in revenue per visit.

The lesson for agent optimization is direct: catalog structure that confuses an AI search system will also confuse a shopping agent. Clean, intent-aligned taxonomy and attribute-complete SKUs are the foundation.

For AI merchandising teams, the catalog audit is also where product discovery improvements generate the most downstream lift – both in on-site search and in external agent discoverability.

Layer 2: Signal Trustworthy Pricing and Real-Time Inventory

Pricing freshness and inventory accuracy are the pass/fail filters most retailers have not yet operationalized for agents. Unlike SEO, where an outdated meta description is a ranking inefficiency, a stale price or inaccurate stock status in an agent context is a trust signal failure with consequences that compound over time.

Why Pricing Freshness Matters More Than Price Competitiveness

Shopping agents compare prices and validate price consistency across your feed, your PDP, and your checkout flow. When those three numbers differ, even by a few dollars from a promotional overlay that did not propagate to the feed, agents treat the discrepancy as a sign of unreliability. Agents that learn which merchants have consistent pricing will deprioritize those that do not, because recommending a product whose price changes at checkout damages the agent’s own trust with its user.

This means price parity across channels is an agent optimization requirement and a user experience requirement in equal measure. A practical audit: spot-check 50 SKUs weekly for price parity between your product feed and the live PDP. For high-velocity categories running frequent promotions, increase that frequency.
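The weekly spot-check can be sketched in a few lines. This is an illustrative sketch, assuming you can already load feed prices and live PDP prices into dictionaries keyed by SKU; how you fetch them (feed export, scraper, internal API) is left out, and the function names and sample prices are hypothetical.

```python
import random
from decimal import Decimal


def sample_skus(skus, k=50, seed=None):
    """Pick up to k SKUs at random for this week's spot-check."""
    rng = random.Random(seed)
    ordered = sorted(skus)
    return rng.sample(ordered, min(k, len(ordered)))


def price_mismatches(feed_prices: dict, pdp_prices: dict, skus) -> list:
    """Return (sku, feed_price, pdp_price) for every SKU whose two prices differ.

    A SKU missing from either source shows up with None on that side.
    """
    mismatches = []
    for sku in skus:
        feed_price = feed_prices.get(sku)
        pdp_price = pdp_prices.get(sku)
        if feed_price != pdp_price:
            mismatches.append((sku, feed_price, pdp_price))
    return mismatches


# Made-up data: a promotion reached the PDP but not the feed.
feed = {"CARRY-40-BLK": Decimal("199.00"), "CARRY-40-RED": Decimal("199.00")}
pdp = {"CARRY-40-BLK": Decimal("179.00"), "CARRY-40-RED": Decimal("199.00")}

for sku, feed_price, pdp_price in price_mismatches(feed, pdp, sample_skus(feed)):
    print(f"{sku}: feed={feed_price} pdp={pdp_price}")
```

Prices are held as `Decimal` rather than floats so that two values that display identically also compare as equal.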

Real-Time Inventory Signals

Agents operating on shopper constraints like “in stock now” or “ships within 2 days” require inventory data that is accurate at the moment of query. Static inventory snapshots updated once daily are not sufficient for this requirement. Merchants should expose inventory availability through the schema.org availability property on each offer (values such as InStock, OutOfStock, and PreOrder) and, where possible, through product APIs that return live stock data rather than cached values.
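A minimal sketch of that mapping, assuming your inventory system exposes a stock count and a last-sync timestamp per SKU. The 15-minute freshness threshold is an arbitrary assumption for illustration, not a published requirement of any agent platform, and the function names are hypothetical.

```python
from datetime import datetime, timedelta, timezone

SCHEMA = "https://schema.org/"


def availability_url(stock_on_hand: int, accepts_preorder: bool = False) -> str:
    """Map a stock count to one of the three availability values named above."""
    if stock_on_hand > 0:
        return SCHEMA + "InStock"
    if accepts_preorder:
        return SCHEMA + "PreOrder"
    return SCHEMA + "OutOfStock"


def is_stale(last_synced: datetime, now: datetime,
             max_age: timedelta = timedelta(minutes=15)) -> bool:
    """Flag inventory records older than max_age so they can be refreshed before publishing."""
    return now - last_synced > max_age


now = datetime(2025, 1, 15, 12, 0, tzinfo=timezone.utc)
last_synced = datetime(2025, 1, 14, 12, 0, tzinfo=timezone.utc)  # yesterday's snapshot

print(availability_url(stock_on_hand=3))
print("Needs refresh:", is_stale(last_synced, now))
```

Publishing the availability value together with a staleness check keeps a day-old snapshot from being presented as live stock.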

This directly affects checkout conversion as well. An agent that selects a product showing as in-stock, only for the shopper to discover it is unavailable at checkout, creates a broken experience that reflects on the agent and the merchant simultaneously.

Feed Freshness Standards

Product feeds submitted to Google Merchant Center, Microsoft Shopping, and any agent-accessible catalog endpoint should refresh at minimum daily. For fashion and consumer electronics, where price and availability change frequently, near-real-time feed updates are increasingly expected. Every product disapproved from a feed is invisible to agents querying that data source. Feed disapproval rate is a direct proxy for catalog reachability and should be tracked as a maintenance metric alongside coverage and attribute completeness.

Trust Signals Agents Can Parse

Beyond price and inventory, agents weigh secondary trust signals when two products are otherwise equivalent. These include: review count and average rating (exposed through aggregateRating schema), return policy (use MerchantReturnPolicy schema), and shipping time estimates (use ShippingDeliveryTime schema). Merchants who have not implemented these schema types are effectively hiding their trust signals from automated evaluation.
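The return-policy and shipping markup can be generated the same way as the rest of the product schema. The sketch below builds the two fragments as Python dictionaries using schema.org types (MerchantReturnPolicy, OfferShippingDetails, ShippingDeliveryTime); the helper names and the sample policy values are hypothetical, and you should validate the output against the current schema.org and Google documentation before deploying it.

```python
def return_policy(country: str, days: int) -> dict:
    """A free, by-mail return policy with a fixed return window."""
    return {
        "@type": "MerchantReturnPolicy",
        "applicableCountry": country,
        "returnPolicyCategory": "https://schema.org/MerchantReturnFiniteReturnWindow",
        "merchantReturnDays": days,
        "returnMethod": "https://schema.org/ReturnByMail",
        "returnFees": "https://schema.org/FreeReturn",
    }


def _day_range(min_days: int, max_days: int) -> dict:
    return {"@type": "QuantitativeValue", "minValue": min_days, "maxValue": max_days, "unitCode": "DAY"}


def shipping_details(country: str, rate: float, currency: str,
                     handling: tuple, transit: tuple) -> dict:
    """Shipping cost plus handling and transit time ranges, in days."""
    return {
        "@type": "OfferShippingDetails",
        "shippingRate": {"@type": "MonetaryAmount", "value": rate, "currency": currency},
        "shippingDestination": {"@type": "DefinedRegion", "addressCountry": country},
        "deliveryTime": {
            "@type": "ShippingDeliveryTime",
            "handlingTime": _day_range(*handling),
            "transitTime": _day_range(*transit),
        },
    }


def max_delivery_days(details: dict) -> int:
    """Worst-case days from order to delivery, the number a 'ships in two days' filter needs."""
    delivery = details["deliveryTime"]
    return delivery["handlingTime"]["maxValue"] + delivery["transitTime"]["maxValue"]


# Attach both fragments to an existing Offer dictionary (sample values are made up).
offer = {"@type": "Offer", "price": "179.00", "priceCurrency": "USD"}
offer["hasMerchantReturnPolicy"] = return_policy("US", days=30)
offer["shippingDetails"] = shipping_details("US", 0, "USD", handling=(0, 1), transit=(1, 1))

print("Worst-case delivery days:", max_delivery_days(offer["shippingDetails"]))
```

Expressing delivery time as explicit ranges is what lets a machine evaluator test a constraint like "ships in two days" without parsing free-text shipping copy.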

Layer 3: Open Your APIs to Agent Protocols

This is the layer where early investment creates the most durable advantage. As AI shopping agents mature, merchants who expose clean, documented, agent-accessible APIs will be discoverable by a wider range of agent platforms. Those who do not will depend entirely on agents being able to scrape or infer their catalog data, a much less reliable path.

How Agents Access Merchant Data

Agents do not only scrape PDPs. They query product APIs, consume structured feeds, and increasingly use standardized agent protocols to interact with merchant systems directly. The technical surface area that matters for agent accessibility is broader than most retailers currently optimize: it includes your product feed, your catalog API, your schema implementation, and increasingly, purpose-built agent communication protocols.

llms.txt: Low-Lift, High-Signal

An llms.txt file is a plain-text file, written in Markdown under the current proposal, placed at your root domain (analogous to robots.txt, but for language models) that tells AI systems which pages and data sources are authoritative for your brand. A well-structured llms.txt links AI systems to your product catalog, return policy, shipping information, and contact data, giving agents a clear path to structured, reliable information rather than making them infer it from page content.

Implementation is a low lift. The file format is plain text. But its signal is meaningful: it tells agents that you have deliberately structured your information for machine consumption, which is a proxy for data reliability.
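To show how small the file is, here is a sketch that renders an llms.txt from a few links. It follows the layout in the public llms.txt proposal (a title, a one-line summary, then sections of annotated links). The store name, URLs, and section names are placeholders, and `render_llms_txt` is a hypothetical helper, not part of any library.

```python
def render_llms_txt(site_name: str, summary: str, sections: dict) -> str:
    """Render an llms.txt body: H1 title, blockquote summary, H2 sections of links."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Placeholder content; replace with your own authoritative sources.
content = render_llms_txt(
    "Example Store",
    "Luggage and travel gear retailer. The links below are the authoritative data sources.",
    {
        "Catalog": [
            ("Product feed", "https://example.com/feeds/products.json", "Full catalog, refreshed daily"),
        ],
        "Policies": [
            ("Returns", "https://example.com/returns", "Return window and conditions"),
            ("Shipping", "https://example.com/shipping", "Delivery times and costs"),
        ],
    },
)

print(content)
```

Generating the file from the same source of truth as your feeds keeps its links from drifting out of date.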

Model Context Protocol (MCP)

Anthropic’s Model Context Protocol (MCP) is gaining adoption as a standard for agent-to-merchant data exchange.

Merchants who expose an MCP-compatible endpoint allow agents — including Claude and any systems built on the MCP standard — to directly query product catalog data, check inventory, and in some implementations, initiate transactions. The early adoption advantage is significant: agents default to sources they can reliably query through established protocols. Being MCP-accessible before your competitors is a discoverability moat.
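What an MCP-compatible endpoint looks like depends heavily on your platform, so treat the following as a sketch under stated assumptions rather than a reference implementation. It assumes the official MCP Python SDK is installed (`pip install mcp`) and uses its `FastMCP` helper to expose two plain functions as tools. The in-memory `CATALOG`, the tool names, and the record fields are hypothetical stand-ins for a real catalog service, and transaction support is deliberately left out.

```python
# Hypothetical in-memory catalog standing in for a real product service.
CATALOG = [
    {"sku": "CARRY-40-BLK", "name": "Waterproof Carry-On 40L", "price": 179.0, "stock": 12},
    {"sku": "CARRY-55-BLK", "name": "Waterproof Carry-On 55L", "price": 229.0, "stock": 4},
    {"sku": "DUFFEL-30-GRY", "name": "Canvas Duffel 30L", "price": 89.0, "stock": 0},
]


def search_products(query: str, max_price: float) -> list:
    """Return in-stock products whose name contains the query and that cost at most max_price."""
    needle = query.lower()
    return [
        product for product in CATALOG
        if needle in product["name"].lower()
        and product["price"] <= max_price
        and product["stock"] > 0
    ]


def check_inventory(sku: str) -> dict:
    """Return current stock for a SKU; unknown SKUs are reported as out of stock."""
    for product in CATALOG:
        if product["sku"] == sku:
            return {"sku": sku, "in_stock": product["stock"] > 0, "stock": product["stock"]}
    return {"sku": sku, "in_stock": False, "stock": 0}


def serve() -> None:
    """Register both functions as MCP tools and start the server (requires the mcp package)."""
    from mcp.server.fastmcp import FastMCP  # third-party dependency, imported only when serving

    server = FastMCP("example-catalog")
    server.tool()(search_products)
    server.tool()(check_inventory)
    server.run()
```

Calling `serve()` starts the server; the two tool functions stay ordinary Python, so they can be unit-tested without any agent attached. For example, `search_products("carry-on", 200)` returns only the 40L bag in this sample data.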

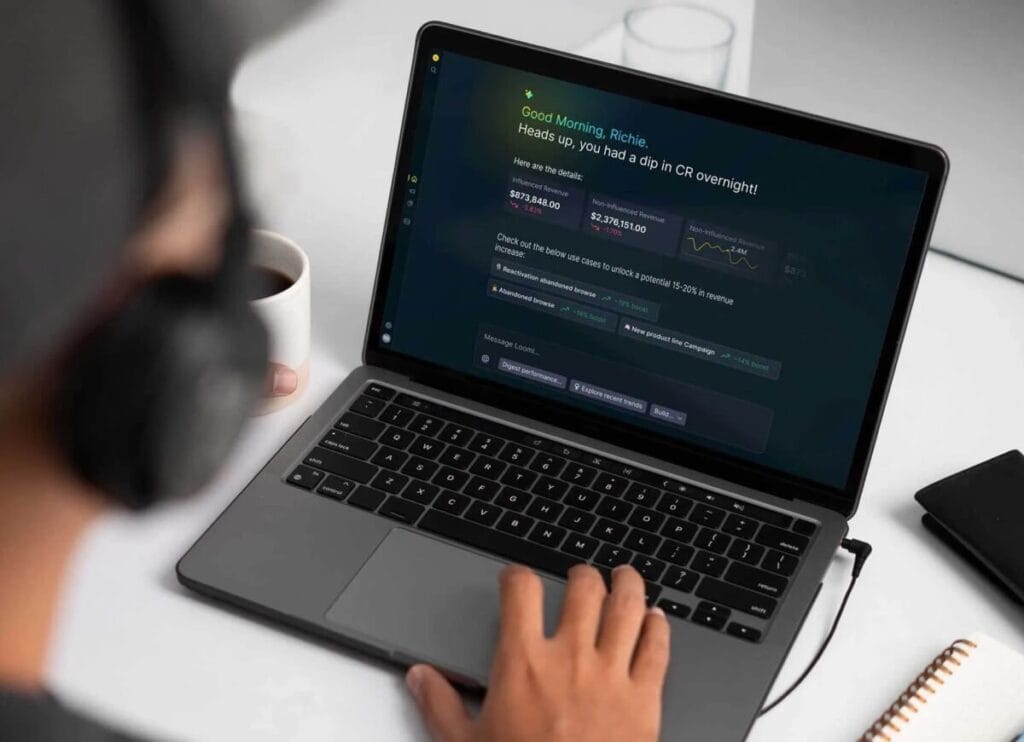

Bloomreach’s Loomi Connect puts your products directly into AI shopping experiences like ChatGPT with full ranking, personalization, and behavioral intelligence. Interaction data flows back into Loomi AI, making every channel smarter over time.

API Architecture for Agent Accessibility

Merchants running headless or composable commerce architectures are natively better positioned here because their product data is already API-accessible by design. For others, the priority is exposing a clean product catalog API endpoint that returns, at minimum: SKU, name, description, attributes, price, availability, images, and category path. If an agent cannot programmatically retrieve those fields, it is relying on less reliable inference methods.

For agentic personalization to function, the same API accessibility that makes your catalog queryable by external agents also enables internal personalization systems to operate in real time.

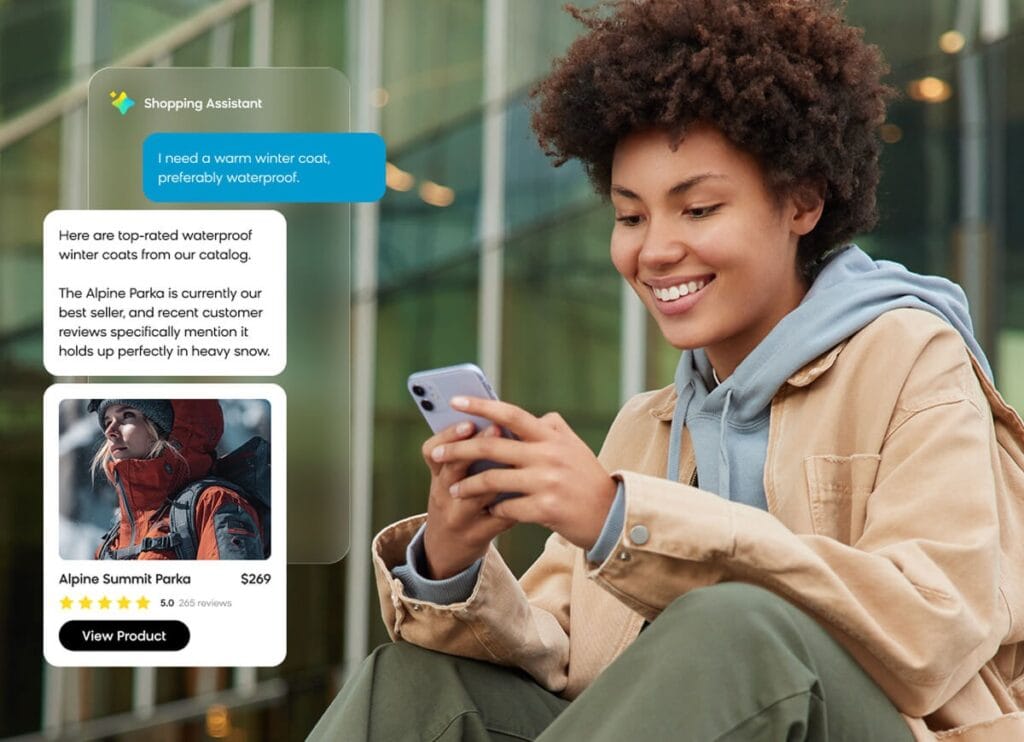

How Defender Learned the Catalog-API Connection

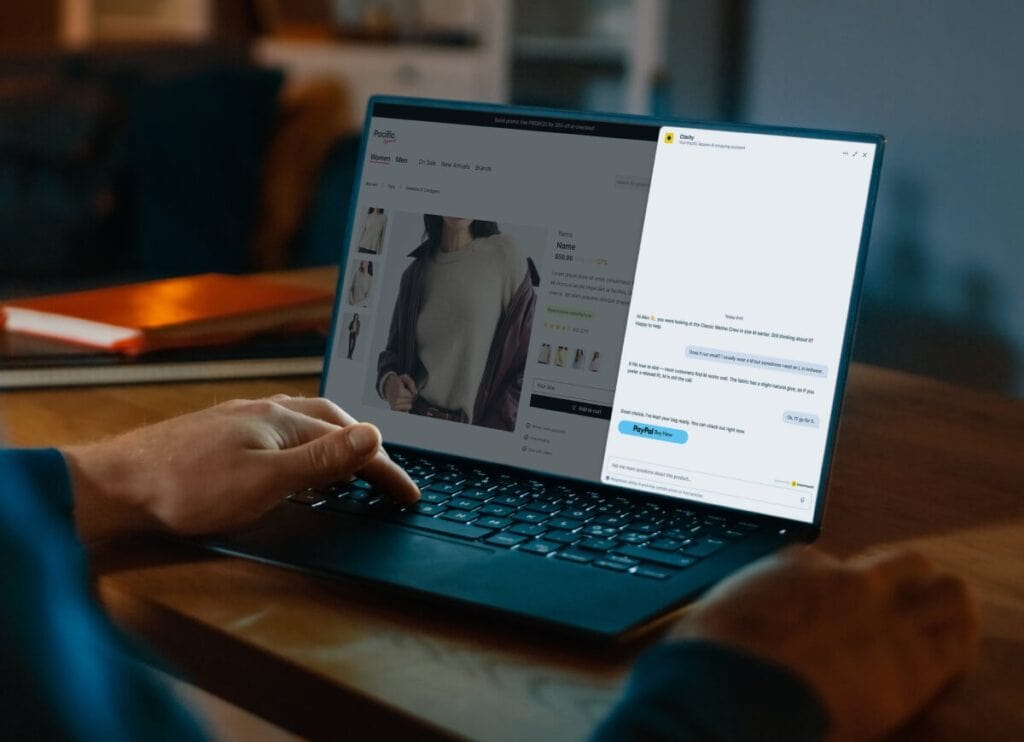

Defender, a marine retailer with products requiring significant purchase expertise, deployed Loomi Conversational Agent on product detail pages and category quizzes. The results included a nearly 3% increase in add-to-cart rate and a more than 3% increase in mobile add-to-cart rate. But the more instructive outcome for agent optimization was what the deployment revealed about catalog structure: Defender gained “insights into more effective site structure” and developed “a better understanding of how to realign its catalog to match how customers search and what they’re looking for.”

The conversational agent layer is, in structural terms, also an API layer. It makes product knowledge queryable. And the catalog restructuring insights it generates are exactly the kind of signals that improve agent discoverability. Conversational shopping infrastructure serves both human shoppers and the agent evaluation layer simultaneously, and AI-powered personal shopping capabilities built on that infrastructure scale across both channels.

Layer 4: Build the First-Party Data Signal That Agents Trust

First-party behavioral data is where the agentic commerce competitive moat gets deepest and most durable. Agents operating on behalf of individual consumers will increasingly be fed that consumer’s preference profile. The merchants who have already built consent-based behavioral data infrastructure are positioned to receive personalized queries that go beyond “find me a waterproof jacket” toward “find me a waterproof jacket for someone who has bought technical outdoor gear at this price point before and prefers sustainable materials.”

That kind of match is only possible when two things are true: the agent has the shopper’s preference data, and the merchant has the product data and behavioral signal that enables a meaningful match. Brands with siloed, fragmented, or stale data will be outcompeted by brands whose customer data is unified, current, and accessible.

What First-Party Signals Matter Most

The behavioral signals most useful for agent-level personalization are: purchase history (what category, brand, price point, and specifications did this customer buy before?), browse and search behavior (what did they consider but not buy?), preference attributes (size, style, brand affinity, material preferences), and recency signals (when did they last engage and what were they looking at?). These signals do not live in your product catalog. They live in your customer data infrastructure, and that infrastructure needs to be unified before it becomes useful.

Data Unification as the Prerequisite

First-party signals are only valuable if they feed a single, unified customer profile. Brands whose email platform, on-site behavior tracking, and purchase history are disconnected from one another have three partial pictures rather than one actionable one. Agents querying for personalized product matching need a coherent signal, not fragmented data from three systems that have never been reconciled.
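In its simplest form, unification means grouping records from each system under one customer key. The sketch below assumes all three sources already share a `customer_id` and carry ISO 8601 timestamps in the same format. Real identity resolution (matching emails, devices, and loyalty IDs) is considerably harder and is not shown, and the record shapes are hypothetical.

```python
from collections import defaultdict


def unify_profiles(email_events: list, site_events: list, orders: list) -> dict:
    """Merge three event streams into one profile per customer_id."""
    profiles = defaultdict(lambda: {"email": [], "browse": [], "purchases": [], "last_seen": None})
    sources = (("email", email_events), ("browse", site_events), ("purchases", orders))
    for key, records in sources:
        for record in records:
            profile = profiles[record["customer_id"]]
            profile[key].append(record)
            # Same-format ISO 8601 strings sort chronologically, so string comparison is enough.
            timestamp = record["timestamp"]
            if profile["last_seen"] is None or timestamp > profile["last_seen"]:
                profile["last_seen"] = timestamp
    return dict(profiles)


# Made-up records from three disconnected systems.
email_events = [{"customer_id": "c1", "timestamp": "2025-01-10T09:00:00Z", "action": "open"}]
site_events = [{"customer_id": "c1", "timestamp": "2025-01-12T18:30:00Z", "viewed": "CARRY-40-BLK"}]
orders = [{"customer_id": "c1", "timestamp": "2024-11-02T14:05:00Z", "sku": "DUFFEL-30-GRY"}]

profiles = unify_profiles(email_events, site_events, orders)
print(profiles["c1"]["last_seen"], len(profiles["c1"]["purchases"]))
```

Even this toy version shows the payoff: recency, browse interest, and purchase history for the same person sit in one record instead of three.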

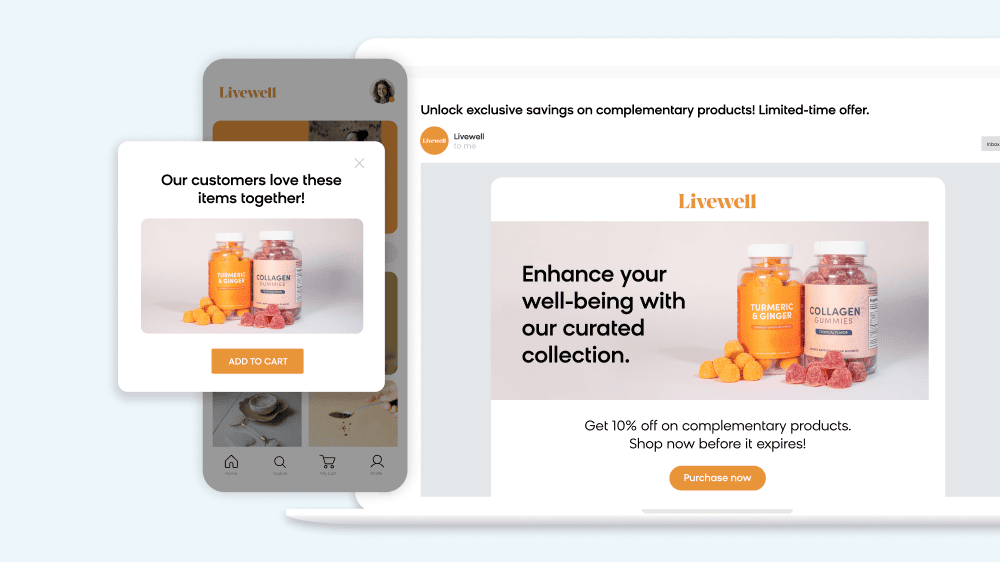

MandM: Unification Enabling Personalization at Scale

MandM Direct operates seven localized websites, ships to 25+ countries, and serves over three million active customers. Before data unification, the brand was operating disconnected channels with siloed behavioral data. By unifying customer data through Loomi AI, MandM built real-time personalization infrastructure that produced measurable outcomes: +5% conversion rates from personalized product-filter buttons, +2.6% revenue per visit from personalized pop-ups, +83.7% click rate on personalized on-site banners, and +66% uplift in purchase conversion when banners reflected individual behavior.

The data unification that makes this personalization work is exactly the same infrastructure that makes agent-level personalization possible. When agents query for preference-based recommendations, a unified customer profile is the answer. Fragmented data returns noise.

Bluebell Group: Real-Time Reconciliation Across Online and Offline

Bluebell Group, Asia’s leading distributor for over 160 brand partners across 10+ Asian markets, faced disconnected customer and product data with no real-time view across their partner brands. After implementing Bloomreach with Shopify, they achieved real-time data unification, including reconciling offline purchases with online journeys to suppress redundant campaigns. The outcomes: 2% of total ecommerce sales from abandoned cart automation, 5.5% of total ecommerce revenue from gifting campaigns, and 12–15% of total ecommerce sales from EDMs (electronic direct mail).

The underlying lesson for agent optimization: when your customer data is unified in real time, every system that touches that data, including external AI agents, can operate on accurate signals.

Consent and Data Governance

First-party data collection requires explicit customer consent, and compliance is both a legal requirement and an agent trust signal. Emerging consent-verification frameworks are building compliant, first-party data exchange into the agent commerce infrastructure, making data governance a discoverability factor as much as a legal one. Brands whose data collection is transparent, documented, and compliant are positioned for that future. Brands with unclear consent chains are not.

Self-optimizing agents and data-driven optimization systems both depend on the same foundation: clean, consented, unified first-party data that gets richer over time as customer relationships deepen.

Layer 5: Measure What AI Agents Actually Respond To

Traditional ecommerce analytics do not tell you how your products are performing within AI agent selection pools. This measurement gap is one of the most significant reasons retailers have no visibility into agent optimization performance. You cannot improve what you cannot see.

Four Metrics to Track for Agent Optimization

1. Agent referral traffic. In GA4, create a segment filtering sessions where the Session source dimension contains known agent platforms: perplexity.ai, chatgpt.com, copilot.microsoft.com, and others as they emerge. Track which products these sessions land on, what the add-to-cart rate is, and whether they convert to purchase. As agent platforms become more prominent referral sources, segmenting by source domain is becoming standard practice in ecommerce analytics.

2. Structured data coverage rate. What percentage of your catalog has a complete product schema with all required fields populated? Audit this monthly. The target for your top-revenue SKUs is 100% coverage. Every SKU missing required fields is a product that agents cannot reliably evaluate.

3. Feed acceptance rate. In Google Merchant Center and any channel feed endpoint, track the disapproval rate. Products disapproved from feeds are invisible to agents querying those data sources. A target of under 1% disapproval on active SKUs is achievable with proper feed management and should be treated as a maintenance metric.

4. Catalog attribute completeness score. For your top 1,000 SKUs, score what percentage have all category-relevant attributes populated. A marine product with 12 expected category attributes should score 12/12, not 7/12. Benchmark this quarterly and set improvement targets for your merchandising team.
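The first and third metrics above reduce to simple ratios once the data is exported. The sketch below assumes session rows exported from your analytics tool as dictionaries with hypothetical keys (`source`, `added_to_cart`, `purchased`); it does not call the GA4 or Merchant Center APIs, and the domain list is only the one named in this section.

```python
AGENT_DOMAINS = ("perplexity.ai", "chatgpt.com", "copilot.microsoft.com")


def is_agent_session(source: str) -> bool:
    """True when the session source contains a known agent platform domain."""
    source = source.lower()
    return any(domain in source for domain in AGENT_DOMAINS)


def rate(part: int, whole: int) -> float:
    """part / whole, returning 0.0 when there is nothing to divide by."""
    return part / whole if whole else 0.0


def agent_referral_summary(sessions: list) -> dict:
    """Session count, add-to-cart rate, and conversion rate for agent-referred sessions."""
    agent_sessions = [s for s in sessions if is_agent_session(s["source"])]
    total = len(agent_sessions)
    return {
        "sessions": total,
        "add_to_cart_rate": rate(sum(s["added_to_cart"] for s in agent_sessions), total),
        "conversion_rate": rate(sum(s["purchased"] for s in agent_sessions), total),
    }


def feed_disapproval_rate(disapproved: int, active: int) -> float:
    """Share of active SKUs currently disapproved in a feed."""
    return rate(disapproved, active)


# Made-up export rows.
sessions = [
    {"source": "chatgpt.com", "added_to_cart": True, "purchased": False},
    {"source": "google", "added_to_cart": False, "purchased": False},
    {"source": "perplexity.ai", "added_to_cart": True, "purchased": True},
]

print(agent_referral_summary(sessions))
print("Under 1% target:", feed_disapproval_rate(8, 1000) < 0.01)
```

Keeping the domain list in one constant makes it easy to extend as new agent platforms start sending traffic.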

Optimization Cadence

A simple, sustainable framework:

- Weekly: Spot-check price/inventory parity on top 50 SKUs. Review feed disapproval rate in Google Merchant Center.

- Monthly: Structured data coverage audit across top-revenue SKUs. Review agent referral traffic segment in GA4.

- Quarterly: Full catalog attribute completeness review across top 1,000 SKUs. Analyze which product categories are generating agent referral traffic and which are not.

Your AI Shopping Agent Optimization Checklist

Everything above distills into five layers of action. Use this as your working audit document.

Layer 1: Catalog (Machine-Readable)

- Deploy product schema (JSON-LD) on all PDPs with all required fields populated: name, description, sku, brand, offers, aggregateRating, image

- Audit top 20% revenue SKUs for complete attribute coverage — no empty specification fields for category-relevant attributes

- Re-write product descriptions to front-load specifications before marketing language

- Verify category taxonomy is specific, hierarchical, and consistently applied across all SKUs in each category

Layer 2: Pricing and Inventory

- Set product feed refresh frequency to daily minimum; move to near-real-time for high-velocity categories

- Implement the schema.org availability property with live inventory status (InStock, OutOfStock, PreOrder)

- Audit 50 SKUs weekly for price parity between feed data and live PDP

- Add MerchantReturnPolicy and ShippingDeliveryTime schema to all PDPs

Layer 3: API Access

- Create and publish llms.txt at your root domain linking to authoritative product data sources, return policy, and shipping information

- Audit your product catalog API: confirm it returns SKU, name, attributes, price, availability, images, and category path

- Evaluate MCP compatibility if your platform supports it; prioritize implementation if you are in a high-consideration product category

Layer 4: First-Party Data

- Unify customer data across email platform, on-site behavior tracking, and purchase history into a single customer profile

- Verify consent collection is compliant with applicable regulations (GDPR, CCPA) and that consent records are documented

- Identify your top behavioral signals (purchase history, browse patterns, category affinity) and confirm they are captured, unified, and accessible to personalization systems

Layer 5: Measurement

- Create GA4 agent referral traffic segment (filter the Session source dimension by perplexity.ai, chatgpt.com, copilot.microsoft.com)

- Schedule monthly structured data coverage audit (target: 100% of top-revenue SKUs with all required schema fields)

- Track Google Merchant Center disapproval rate weekly (target: under 1% of active SKUs)

- Implement quarterly catalog attribute completeness scoring for top 1,000 SKUs; set improvement targets by category

How Bloomreach Helps You Optimize for AI Shopping Agents

Executing these five layers at scale requires infrastructure built for AI-native commerce, not retrofitted tools. Here is how we support each layer.

Catalog layer: Loomi AI analyzes product attributes, understands category relationships, and creates the intelligent product matching foundation that both on-site search and external agents depend on.

Conversational and API layer: Loomi Conversational Agent acts as a scalable AI shopping concierge and an agent-accessible product knowledge interface. The conversational shopping infrastructure we provide makes product knowledge queryable at the API level, which matters as agent protocols mature.

First-party data layer: Loomi AI unifies customer data across channels into a single behavioral profile that personalization systems — and agents — can query reliably.

Analytics layer: Surfacing search intent, catalog performance, and behavioral patterns through natural language requests, rather than manual query-building, provides the measurement infrastructure that makes ongoing agent optimization possible. It is a productivity gain and a competitive advantage in one: you cannot improve what you cannot measure quickly.

The brands winning agentic commerce are building the infrastructure now, while the competitive gap is still open. Talk to a Bloomreach expert to see how your product data, APIs, and first-party signals stack up.

Frequently Asked Questions: Optimizing for AI Shopping Agents

What is the difference between SEO and optimizing for AI shopping agents?

Traditional SEO is designed to help human readers find and evaluate content through search engines. AI shopping agent optimization is designed to help automated systems parse, evaluate, and select products based on structured data signals. SEO focuses on page copy, keyword density, and backlinks. Agent optimization focuses on schema completeness, attribute accuracy, pricing freshness, and API accessibility. Both matter for discoverability, but they require different technical approaches.

Do I need to change my website design to be discovered by AI shopping agents?

Not necessarily. The most important changes are in your product data layer – schema markup, feed quality, attribute completeness – rather than your visual design. Agents do not evaluate design. They evaluate structured data. A site with no visual design refresh but complete product schema and live inventory signals will outperform a beautifully designed site with sparse structured data.

What is llms.txt and do I actually need it?

llms.txt is a plain text file placed at your root domain that tells language model systems which pages and data sources are authoritative for your brand. It is analogous to robots.txt but intended for AI systems rather than traditional web crawlers. Implementation is low-lift and signals that your data is deliberately structured for machine consumption. It is not mandatory today, but adoption is growing and early implementation is a low-cost way to signal reliability to agent platforms.

How do AI shopping agents use my product reviews?

Reviews and ratings exposed through aggregateRating schema are parseable trust signals that agents weigh when two products are otherwise comparable on specifications and price. A product with 4.6 stars from 1,200 reviews will typically score higher in agent trust evaluation than a product with 4.8 stars from 12 reviews, because the former provides a more statistically reliable signal. Ensuring your review data is structured in schema (and visible on the page) is what makes it accessible to agents.
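How any particular agent weighs review volume is not public, so the following is only one common way to express the idea: a Bayesian average that pulls a product's rating toward a prior until enough reviews accumulate. The prior mean of 4.0 and prior weight of 50 reviews are arbitrary assumptions chosen for illustration.

```python
def bayesian_average(rating: float, review_count: int,
                     prior_mean: float = 4.0, prior_weight: int = 50) -> float:
    """Blend the observed rating with a prior; few reviews means the prior dominates."""
    return (rating * review_count + prior_mean * prior_weight) / (review_count + prior_weight)


many_reviews = bayesian_average(4.6, 1200)  # the 4.6-star, 1,200-review product
few_reviews = bayesian_average(4.8, 12)     # the 4.8-star, 12-review product

print(f"{many_reviews:.2f} vs {few_reviews:.2f}")
```

Under these assumptions the 1,200-review product scores higher despite its lower raw average, which is the effect described above.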

What is Model Context Protocol (MCP) and should my ecommerce team care about it?

Anthropic’s Model Context Protocol is an emerging standard that allows AI agents to directly query external data sources and tools. For ecommerce, it means agents can query your product catalog, check inventory, and potentially initiate transactions through a standardized interface. Whether your platform currently supports MCP depends on your tech stack. For merchants on headless or composable architectures, MCP compatibility is closer. For others, it is worth evaluating in your 12-month technology roadmap as agent adoption grows.

How do I measure whether agent optimization is working?

Start with GA4. Create a segment filtering sessions where the session source contains known agent platforms (perplexity.ai, chatgpt.com, copilot.microsoft.com) and track what those sessions look at and whether they convert. Layer in structured data coverage audits and Google Merchant Center feed disapproval rates as proxy metrics for catalog health. Agent referral traffic is small today but growing, and building the measurement infrastructure now means you will have baseline data when the channel becomes significant.

Which product categories are most affected by AI agent shopping behavior?

High-consideration, specification-driven categories see the earliest and most pronounced agent influence: consumer electronics, outdoor and sporting goods, home appliances, auto parts, and marine equipment. These are categories where shoppers are already providing explicit constraints (compatibility, weight, power rating, size) – exactly the kind of structured query agents execute well. Fashion and beauty follow as agents become more capable at handling preference-based selection. In practice, every category with filterable attributes is exposed to agent optimization dynamics.