All of us have used a search box. Its purpose is well defined: you type something in, and you get results related to what you were looking for. If you’re on an ecommerce site, those results are products or services. Well, that’s what should happen, anyway, but not every search vendor is capable of serving up results that are relevant, personalized, and optimized for important ecommerce metrics like conversions, revenue, and add-to-cart rate.

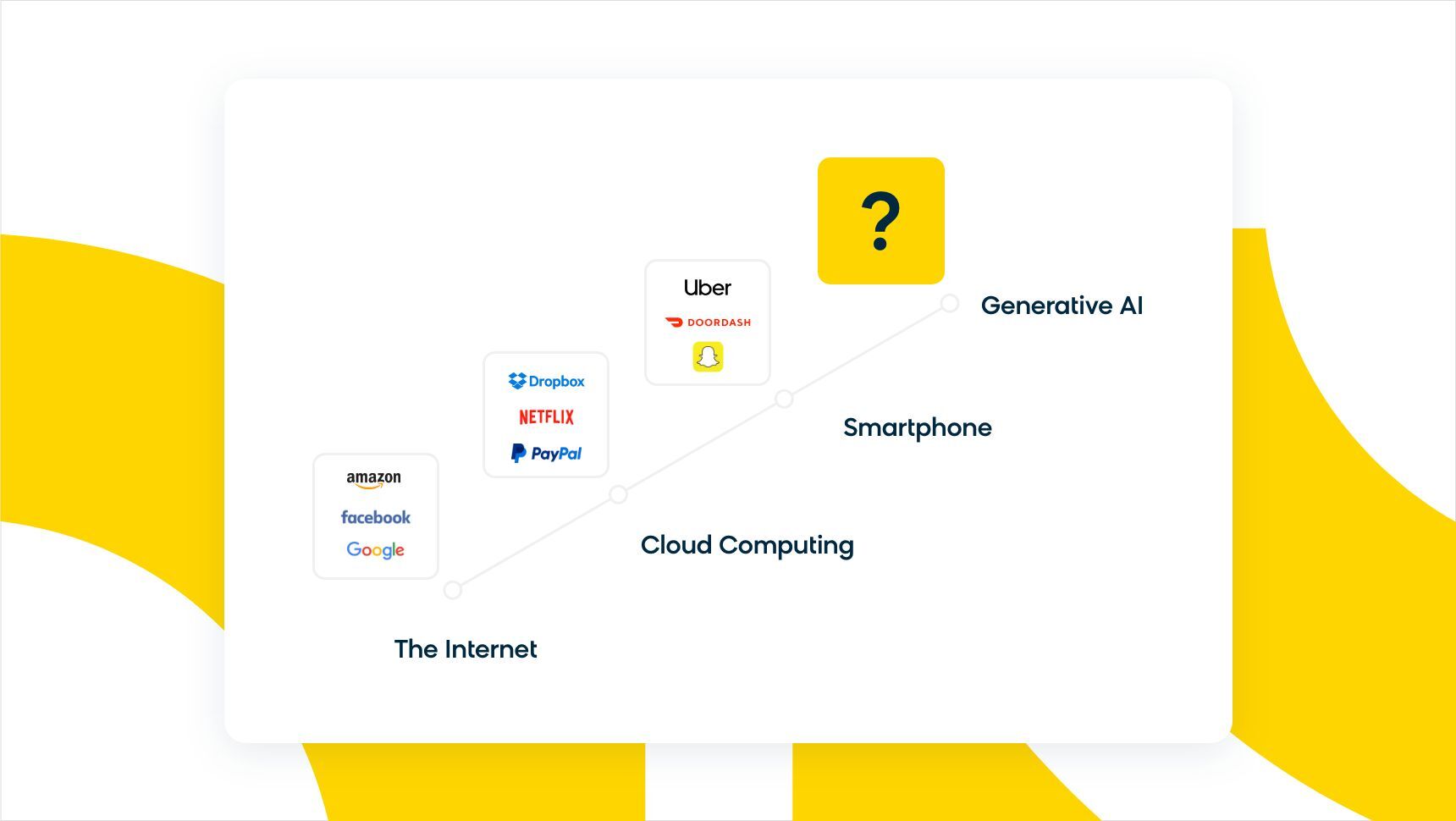

Despite the holes already in the market, an evolution is happening underneath the current environment of ecommerce site search, and it’s made a big splash in the court of public opinion over the past two years. That’s right, generative AI has entered the chat with ChatGPT and Gemini, just some of the many foundation models now available. More people are also curious about the tech behind generative AI, known as “large language models” or LLMs, which use massive amounts of data to learn, respond, and even generate human-like language for different queries. The power of LLMs has even been compared to the human brain.

However, we should understand that LLMs are a two-sided coin. On one side, there are all the possibilities for deep learning and innovation, and on the other, there is a fair amount of risk. The commercial stakes are real: retail and ecommerce is projected to account for 25-27.5% of the global enterprise LLM market in 2026. Yet while large language models show a lot of potential, they also have a long way to go in terms of development. Valid questions are being raised about the ethics and potential risks associated with the global AI race. It’s especially important to remember that LLMs can be manipulated with “jailbreak” prompts like “Do Anything Now,” where people subvert the AI’s purpose for their own amusement or gain.

All in all, it isn’t a perfect technology. Although pretrained, LLMs require ongoing refinements and adjustments to ensure consistent accuracy and relevance. Even then, a big question on the minds of commerce professionals remains: Where do LLMs fit into a shopping scenario? Is it possible now?

Since artificial intelligence has never been (and will never be) a finite line drawn in the sand, this question is more complex and multi-faceted than it seems.

AI Should Already Be Embedded in Ecommerce Site Search

AI in ecommerce search today drives revenue by using a combination of recall and ranking algorithms and training data to deliver the most relevant results that match your shopper’s intent. This happens through a combination of natural language processing (NLP), often built on transformer models, and machine learning (ML). NLP breaks down the context behind shoppers’ queries, and ML learns about your business by analyzing how shoppers behave on your site. This self-learning AI becomes smarter daily and can rapidly adapt to your customers’ ever-changing needs as you continue to scale.

However, your business will need to see your customers as more than just “customers” to succeed in the current competitive space. The winners in ecommerce find the “seekers.” They look beyond a person’s singular identity as “the consumer” to uncover their true motivation behind wanting a certain product or service, then build digital shopping experiences specific to them. This is where AI and machine learning models can help tremendously, whether it’s crunching product and customer data to deliver insights and optimization suggestions or offering recommendations based on similar products.

MIT Sloan research found that LLMs outperform traditional search at identifying customer needs when buyers can’t precisely articulate what they want, which is exactly the seeker problem this article is about.

As we look toward the future, we must recognize that the potential for LLMs to revolutionize ecommerce search lies in their ability to provide shoppers with hyper-personalized experiences without the typical prompting required of a traditional search bar.

Why Should Ecommerce Care About LLMs and Generative AI?

As we all probably know, ChatGPT took the conversation about generative AI to the mainstream and provided an environment where anyone could interact with it. Not only has it caused a lot of discussion around the current uses of AI within the ecommerce industry, but it also has a lot of professionals wondering what LLMs look like when we picture the future of ecommerce.

LLM ecommerce use cases have multiplied fast, and most of the interesting ones show up around the search bar, where brands and retailers assist their customers:

- LLM-Based Precision – Leveraging a large language model for search optimization marks a significant evolution in product search by making torso and long-tail searches, like “men’s running shoes” or “women’s hiking boots size 8,” more precise and relevant. LLM-based precision broadens search coverage across both broad and highly specific queries, crucial for linking users with products they’re truly interested in, which highlights the immense value of understanding search intent.

- LLM-Based Search Recall – Integrating tools like synonyms, spell corrections, and relaxation rules with LLMs enhances search recall by identifying similarities and semantic meanings in queries, surpassing traditional text-match systems. This approach improves search results for nuanced needs, such as “running shoes good for knees,” by focusing on semantic intent rather than just keywords. As a result, you’ll deliver quality search results for every query.

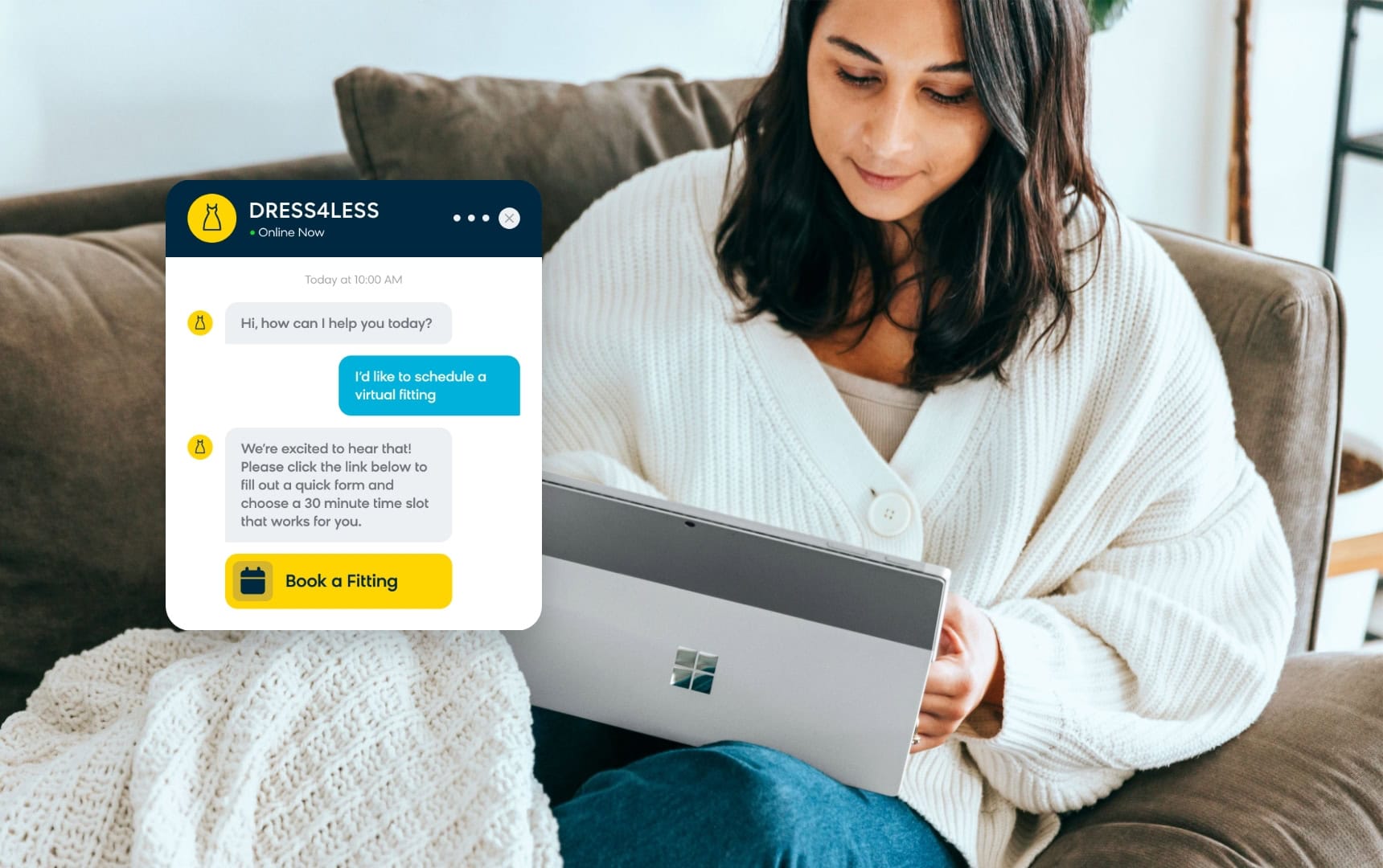

- Virtual Shopping Assistants – Powered by LLMs, vector technology, and personalization, virtual shopping assistants are set to transform online customer service into a conversational, intuitive experience that goes beyond chatbots. By understanding complex queries, connecting shoppers with suitable products through semantic recognition, and tailoring interactions based on customer data, conversational commerce merges the convenience of digital shopping with the personalized touch of in-store interactions.

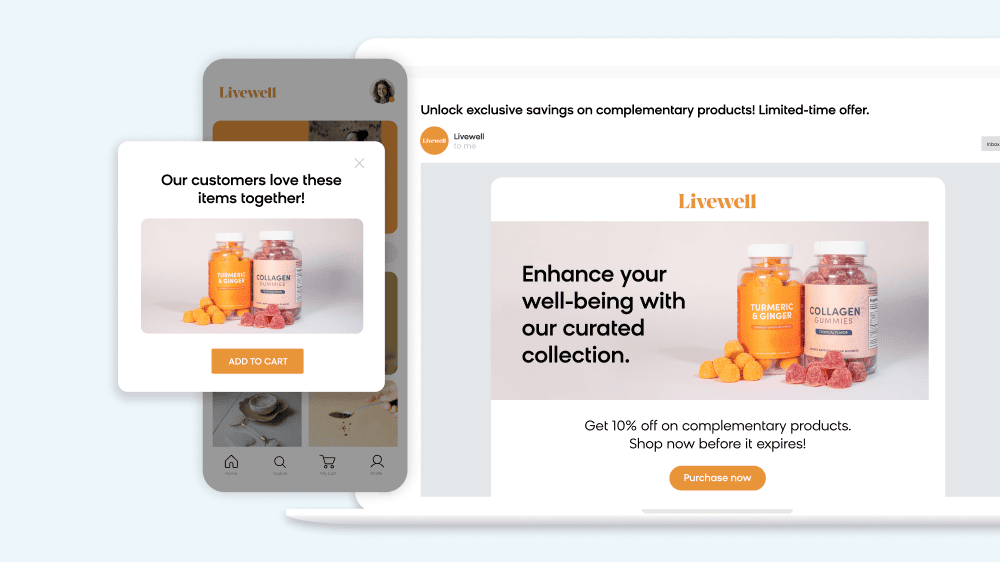

- Content Generation & Personalization – LLMs excel at creating personalized product descriptions, category pages, and recommendation explanations that speak directly to individual customer preferences. Instead of generic “customers who bought this also bought that” messaging, LLMs can craft contextual explanations like “perfect for your upcoming hiking trip based on your recent searches.” The conversational commerce market continues expanding as consumers expect more interactive, dialogue-driven shopping experiences that feel natural rather than transactional.
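To make the search recall point above concrete, here is a minimal Python sketch of why a strict text match can miss a query like “running shoes good for knees” while a similarity comparison can still put a sensible product first. Everything in it is an assumption for illustration: the product names are invented, and the three-number vectors are hand-written stand-ins for the embeddings a real language model would produce.

```python
import math

# Hypothetical catalog. Each vector is a hand-made stand-in for a real embedding;
# the axes loosely mean (running, cushioning / joint support, formal wear).
CATALOG = {
    "CloudStride max-cushion road runner": [0.9, 0.9, 0.0],
    "TrackLite racing flat": [0.9, 0.1, 0.0],
    "Oxford leather dress shoe": [0.0, 0.1, 0.9],
}


def keyword_match(query, title):
    """Strict text match: every query word must appear in the product title."""
    title_words = set(title.lower().split())
    return all(word in title_words for word in query.lower().split())


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_rank(query_vector, catalog):
    """Order product titles from most to least similar to the query vector."""
    return sorted(
        catalog,
        key=lambda title: cosine(query_vector, catalog[title]),
        reverse=True,
    )


query = "running shoes good for knees"
query_vector = [0.7, 0.8, 0.0]  # assumed embedding of the query above

keyword_hits = [title for title in CATALOG if keyword_match(query, title)]
ranked = semantic_rank(query_vector, CATALOG)

print("Keyword hits:", keyword_hits)
print("Semantic ranking:", ranked)
```

In this toy setup the keyword match returns nothing, because no title contains the literal words of the query, while the similarity ranking places the cushioned running shoe first. A production system would get its vectors from an embedding model and would usually blend both signals instead of choosing one.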

It all comes back to the seeker and unlocking the full potential of their customer journey using generative AI. Take, for instance, when somebody is looking to decorate their living room with neutral, earthy tones, but they don’t know how to verbalize this “want” into a search query. Although the search terms might be unknown to the seeker, an LLM can bring context to their particular need through a simple conversation.

We just have to think of AI as the digital version of the Industrial Revolution — language models will revolutionize our world and how we live. Nonetheless, we must balance the innovation and creativity inherent in language models with human ingenuity.

The Newest Frontier: Agentic AI

More recently, we’ve been seeing rapid developments in the world of agentic AI. In a nutshell, agentic AI is artificial intelligence that can take initiative and act with goal-oriented behavior. Instead of simply following a set of commands, AI agents can take the objective you set and decide on the best way to reach it. They can reason, learn, adapt, and optimize without the need for constant oversight or intervention.

This opens up a bevy of opportunities for reshaping the shopping experience. For example, marketers can use agentic personalization to launch hyper-targeted campaigns in minutes or hours instead of weeks. According to eMarketer research, agentic AI will reshape how consumers discover and purchase products throughout 2026.

Agentic AI also helps power autonomous search, which can optimize search based on every interaction from a shopper. This allows brands to transform product discovery into a more conversational experience, which is what consumers are increasingly gravitating toward.

Look, for example, at Loomi Conversational Agent. It’s embedded directly in the website at key moments in a shopper’s journey (such as on product and checkout pages or within the search bar) and improves consumer confidence by answering questions, sharing expertise, and providing tailored recommendations. This level of personalization is only made possible through AI and LLMs, and it’s set to completely change the way people shop.

The theoretical promise of LLMs in ecommerce is compelling, but the real proof lies in how brands are actually implementing these technologies today and seeing measurable results.

Take TFG, South Africa’s largest fashion retailer with 4,800+ outlets across 37 brands. They ran a Black Friday A/B test of our conversational shopping agent on their lifestyle platform Bash to see whether LLM-driven shopping could actually move the numbers without feeling intrusive. The results were striking: a 35.2% increase in conversion rate, 39.8% higher revenue per visit, and a 28.1% reduction in exit rate. What unlocked those numbers was the agent’s ability to interpret open-ended questions and connect them to products, instead of forcing shoppers into a rigid keyword-match pattern. “What surprised us was how quickly the conversational agent developed and grew,” said Clynton McCalgan, Head of Digital at Bash. “It developed rapidly within a handful of months.”

Similarly, Torrid, the American women’s retailer serving sizes 10-30 with 400+ stores, applied Loomi AI to their site search experience. Semantic understanding means the search surfaces relevant products even when customers can’t perfectly articulate what they’re looking for, and each shopper sees results tuned to their behavior. The team got the project live in 6 weeks. “The level of confidence we have in showing our customer the product they are searching for has grown immensely,” notes Sumaira Khan, Ecommerce Program Manager at Torrid.

For flower delivery network Interflora, Loomi AI-powered search and merchandising drove a 221% revenue increase during Mother’s Day, a 17.5% lift in category revenue per visitor, and a 1.1 percentage point increase in search conversion rate across multiple markets and languages.

For B2B applications, HD Supply’s implementation of AI-powered search delivered a 16% increase in revenue from search. Their add-to-cart rate from list and product detail pages went up 4%, and their team can now identify and resolve query problems in around 30 seconds using the Insights dashboard. Semantic understanding matters more in B2B precisely because the queries are messier: part numbers, compatibility constraints, and technical jargon that keyword-match search chokes on.

Overcoming LLM Implementation Challenges

Despite the promising results, implementing LLMs in ecommerce isn’t without obstacles. Understanding and addressing these challenges early can mean the difference between a successful rollout and a costly misstep.

Data quality stands as the foundational challenge. LLMs are only as good as the product information they draw on. Incomplete product descriptions, inconsistent categorization, and outdated inventory data will produce poor recommendations regardless of how sophisticated the underlying model is. Smart retailers start by auditing their product data completeness before implementing LLM features.
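A completeness audit does not need to be elaborate to be useful. The sketch below is one possible starting point in Python, not a prescribed method: the required field names and the sample products are assumptions, and a real audit would pull records from your own catalog or PIM export.

```python
# Hypothetical list of fields every product record should carry.
REQUIRED_FIELDS = ("title", "description", "category", "price", "in_stock")

# Invented sample records for illustration only.
PRODUCTS = [
    {"sku": "A1", "title": "Trail boot", "description": "Waterproof hiking boot",
     "category": "Footwear", "price": 129.0, "in_stock": True},
    {"sku": "B2", "title": "Canvas sneaker", "description": "   ",
     "category": "Footwear", "price": 49.0, "in_stock": False},
    {"sku": "C3", "title": "Wool sock", "description": "Merino blend",
     "category": None, "price": 12.0},
]


def missing_fields(product):
    """Return the required fields that are absent, None, or blank strings."""
    missing = []
    for field in REQUIRED_FIELDS:
        value = product.get(field)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(field)
    return missing


def audit(products):
    """Map each SKU to its missing fields and compute the share of complete records."""
    report = {product["sku"]: missing_fields(product) for product in products}
    complete = sum(1 for gaps in report.values() if not gaps)
    completeness = complete / len(products) if products else 0.0
    return report, completeness


report, completeness = audit(PRODUCTS)
for sku, gaps in report.items():
    print(sku, "OK" if not gaps else "missing: " + ", ".join(gaps))
print(f"Complete records: {completeness:.0%}")
```

Note that `in_stock: False` counts as present, since an out-of-stock flag is still valid data. Only absent, empty, or whitespace-only values are reported as gaps.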

The hallucination problem, where LLMs generate plausible but incorrect information, poses particular risks in commerce. A conversational agent that confidently recommends a product that’s out of stock or misrepresents features can damage customer trust. The solution lies in grounding LLM responses in verified product data and implementing safeguards that prevent the model from extrapolating beyond known facts. A recent ACM study on LLM shopping experiences found that shoppers using grounded LLM-powered interfaces completed more tasks successfully and rated satisfaction higher than they did with traditional keyword search, which is the measurable upside once the hallucination problem is under control.
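One simple form of grounding is to treat whatever the model suggests as a proposal and check it against verified catalog data before anything reaches the shopper. The Python sketch below shows that idea under stated assumptions: the catalog, the SKUs, and the `ground_suggestions` helper are all hypothetical, and the list of suggested SKUs stands in for the output of a real model call.

```python
# Hypothetical verified catalog, keyed by SKU.
CATALOG = {
    "SKU-1": {"name": "Trail boot", "in_stock": True, "waterproof": True},
    "SKU-2": {"name": "Canvas sneaker", "in_stock": False, "waterproof": False},
}


def ground_suggestions(suggested_skus, catalog):
    """Keep only suggestions that exist in the catalog and are in stock.

    Returns (grounded, rejected). Grounded entries carry attributes copied
    from the catalog, so the reply never relies on model-invented details.
    """
    grounded, rejected = [], []
    for sku in suggested_skus:
        record = catalog.get(sku)
        if record is None:
            rejected.append((sku, "not in catalog"))
        elif not record["in_stock"]:
            rejected.append((sku, "out of stock"))
        else:
            grounded.append({"sku": sku, **record})
    return grounded, rejected


# Stand-in for model output: one valid SKU, one out of stock, one invented.
model_suggestions = ["SKU-1", "SKU-2", "SKU-9"]

grounded, rejected = ground_suggestions(model_suggestions, CATALOG)
print("Show to shopper:", [item["name"] for item in grounded])
print("Filtered out:", rejected)
```

Logging the rejected suggestions is a deliberate choice here: a rising share of “not in catalog” entries is an early signal that the model is drifting away from your actual assortment.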

Cost considerations also weigh heavily on implementation decisions. Harvard Business Review research suggests that businesses need to balance the computational expense of running LLM queries against the expected increase in conversion rates and average order values. Many successful implementations start with focused use cases, like improving search for specific high-value product categories, rather than attempting site-wide deployment immediately.
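The trade-off above can be put into rough numbers. The following Python sketch estimates the relative conversion uplift needed to cover LLM query costs. It assumes one LLM call per search and that the uplift applies only to search-driven orders, and every figure in the example is made up for illustration.

```python
def break_even_uplift(cost_per_query, baseline_conversion, average_order_value, gross_margin):
    """Relative conversion uplift at which extra profit equals LLM query cost.

    Assumes one LLM call per search. A result of 0.01 means conversions
    must improve by 1% relative to the baseline to break even.
    """
    profit_per_search = baseline_conversion * average_order_value * gross_margin
    if profit_per_search <= 0:
        raise ValueError("baseline profit per search must be positive")
    return cost_per_query / profit_per_search


def monthly_net_gain(searches, cost_per_query, baseline_conversion,
                     average_order_value, gross_margin, relative_uplift):
    """Extra gross profit from the uplift minus the LLM bill for the month."""
    extra_profit = (searches * baseline_conversion * relative_uplift
                    * average_order_value * gross_margin)
    llm_cost = searches * cost_per_query
    return extra_profit - llm_cost


# Made-up inputs: $0.002 per query, 3% search conversion, $80 AOV, 30% margin.
needed = break_even_uplift(0.002, 0.03, 80.0, 0.30)
gain = monthly_net_gain(100_000, 0.002, 0.03, 80.0, 0.30, relative_uplift=0.05)

print(f"Break-even relative uplift: {needed:.2%}")
print(f"Net monthly gain at a 5% uplift: ${gain:,.2f}")
```

Running the same calculation per product category is one way to decide where a focused pilot makes sense, since high-margin, high-order-value categories reach break-even at a much smaller uplift.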

Technical integration complexity varies significantly based on existing infrastructure. Retailers with modern, API-first commerce platforms typically see faster implementation timelines, while those with legacy systems may need to invest in middleware solutions or phased rollouts to avoid disrupting current operations.

Where LLM Ecommerce Goes Next

The next phase of LLM ecommerce adoption is already taking shape. Multimodal capabilities, where models process images, voice, and text together, will let customers search by photographing a product or speaking naturally instead of typing queries. Voice commerce will move past simple reordering and start handling the kind of discovery conversations that used to require a store associate.

The bigger shift is structural. LLMs are becoming the orchestration layer connecting every customer touchpoint, from discovery through post-purchase support. The brands investing now in grounded, well-instrumented LLM search and conversational agents are the ones setting the expectation everyone else will be measured against.

As large language models continue to evolve, you’ll see a fundamental shift in what consumers expect out of their experiences with your brand. To see what a grounded conversational agent and LLM-driven search look like in practice, take a short tour of our platform.

FAQ: LLMs in Ecommerce

How much does it cost to implement LLM-powered search on an ecommerce site?

Implementation costs vary widely based on site complexity, product catalog size, and feature scope. Many platforms offer usage-based pricing models that scale with search volume, making it accessible for businesses of different sizes to start with pilot programs.

How long does it take to see results from LLM implementation?

Initial improvements in search relevance appear within weeks, as seen with Torrid’s six-week go-live. The full benefits of personalization and conversational features typically develop over 2-3 months as the system learns from customer interactions.

What data do I need to make LLMs effective for my ecommerce site?

Clean product data forms the foundation: detailed descriptions, accurate categorization, current pricing, and inventory status. Customer behavioral data enhances personalization, and search query logs help identify common pain points to address first.

Can LLMs work with my existing search provider?

Many LLM solutions integrate with existing search infrastructure rather than requiring complete replacement. The key is ensuring your current system can accept and process semantic search inputs alongside traditional keyword queries.

How do I prevent LLM hallucinations from affecting customer experience?

Ground all LLM responses in verified product data, set confidence thresholds for recommendations, and maintain human oversight for complex queries. Many successful implementations use LLMs to enhance rather than replace existing search logic.

What metrics should I track to measure LLM search success?

Focus on search conversion rate, query success rate (finding relevant results), time to purchase, and customer satisfaction scores. Compare these against your previous search performance to quantify improvement.
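As a rough illustration, these metrics can be computed from a log of search sessions. The Python sketch below uses invented sessions and hypothetical field names, and it assumes a query counts as “successful” when the shopper clicks at least one result, which is only one of several reasonable definitions.

```python
from statistics import median

# Invented search sessions; the field names are assumptions for illustration.
SESSIONS = [
    {"clicked_result": True, "purchased": True, "seconds_to_purchase": 240},
    {"clicked_result": True, "purchased": False, "seconds_to_purchase": None},
    {"clicked_result": False, "purchased": False, "seconds_to_purchase": None},
    {"clicked_result": True, "purchased": True, "seconds_to_purchase": 360},
]


def search_metrics(sessions):
    """Compute conversion rate, query success rate, and median time to purchase."""
    total = len(sessions)
    if total == 0:
        return {
            "search_conversion_rate": 0.0,
            "query_success_rate": 0.0,
            "median_seconds_to_purchase": None,
        }
    times = [s["seconds_to_purchase"] for s in sessions if s["purchased"]]
    return {
        "search_conversion_rate": len(times) / total,
        "query_success_rate": sum(1 for s in sessions if s["clicked_result"]) / total,
        "median_seconds_to_purchase": median(times) if times else None,
    }


def relative_lift(before, after):
    """Relative change between two rates, e.g. 0.25 for a 25% lift."""
    return (after - before) / before


metrics = search_metrics(SESSIONS)
print(metrics)

# Comparing a previous rate with a new one (both numbers are made up).
lift = relative_lift(0.020, 0.025)
print(f"Relative lift: {lift:.0%}; absolute change: {(0.025 - 0.020) * 100:.1f} points")
```

The last line prints both the relative lift and the percentage-point change because the two are easy to confuse: moving from 2.0% to 2.5% is a 25% lift but only a 0.5 point increase.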

Are LLMs suitable for B2B ecommerce as well as B2C?

Yes. B2B implementations often see strong results because business buyers frequently search using complex, technical queries that benefit from semantic understanding. HD Supply’s 16% increase in revenue from search demonstrates B2B applicability.

How do LLMs handle multiple languages for global ecommerce sites?

Modern LLMs support multilingual capabilities, though performance varies by language. Interflora’s success across multiple markets shows that LLM-powered search can scale internationally with proper configuration.