As a digital merchandiser or marketer, you’ve probably heard the words “let’s test it” more times than you can count. Even as the amount of data available to drive decision-making has exploded in the past several years, many teams wake up one day and realize that their learnings are siloed, tactical, and short-lived.

Learnings Are Siloed

Functionally, A/B testing usually takes place within a single channel, though nowadays, a website banner test, a paid social test, and a paid search test may all be taking place for a single campaign or promotion. However, all too often, the social team leaves with a social campaign learning while the website team leaves with a site banner learning. They don’t necessarily share learnings with each other, or look for overlapping takeaways that, when converged, point to a meaningful, deeper truth.

Learnings Are Tactical

Running over 150 tests on CTAs, subject lines, image types, etc. can be effective, but it won’t leave you with much proprietary information about your customer, your products, or your vertical. This is often why big checks are written to firms specializing in customer research: Third-party research is the antidote to the affliction of lacking strategic learnings.

Learnings Are Short-lived

Tactical best practices frequently live and die by the digital minute, whereas human truths about the fundamental ways in which a category is shopped, for instance, can last a lifetime. This means that the return on learning is often incremental and not exponential.

So the question is, how can we make learning connected, meaningful, and long-lasting?

Set Your Learning Sights High(er)

So many learnings are tactical because no higher, significant purpose for testing was ever identified in the first place. The obsession with conversion rate has taken center stage, so don’t be alarmed if you’re reading this and realizing that conversion rate is the “sun” of your testing universe.

What are your organization’s biggest strategic desired learnings? Think of the big, “hairy” questions that are not easily answered with a survey or a simple A/B test. What are the learnings that, if realized and leveraged, would help move a massive needle like market share? These desired learnings should be front and center as you create your learning agenda. Some examples could include:

- Which attributes of our top categories draw shoppers in, and which “close the deal”?
- What kind of website messaging should our brand voice adopt to be most compatible with our customers?
- What are the best shopping tools we can provide to improve our experience?
- What kinds of content can we provide to best guide potential customers to purchase? What kinds of content inspire them to shop?
- What is the best frequency for engaging our customers?

Notice how these questions are not easily answered with a single test. That’s what makes them good candidates for learning agenda questions.

Form a Learning Team and Create a Centralized Learning Agenda

Create a lean, agile team to lead test-and-learn strategy, ensuring you have representation from all paid, owned, and earned channels. Broad representation makes for better test-and-learns and builds connections between the channel teams. Make sure you have senior leadership representation, like an engaged executive sponsor. They can help champion the “learning pod” and its work, which lives in a shared Excel or Google Sheets file (your learning agenda).

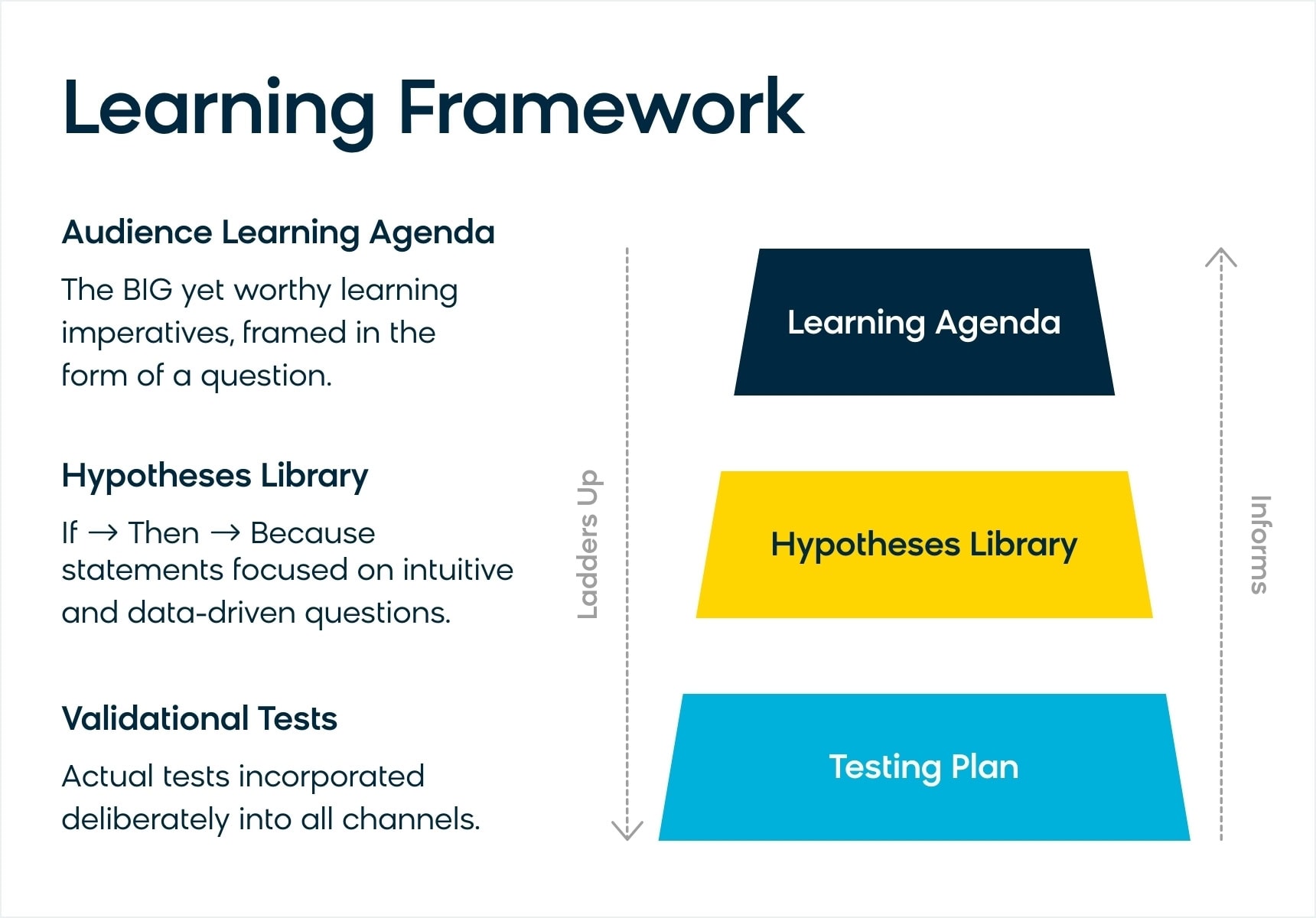

This centralized document acts as a repository for your learning agenda questions, and every single hypothesis should ladder up to this document in some way. Note that these don’t include more tactical tests, such as testing boost/bury rules in your product grids or CTA tests within email campaigns.

See the visual below to understand the relationship between elements in our recommended test-and-learn approach:

In your shared document, ideate hypotheses to test that ladder up to your learning agenda questions. Merchandisers can get a head start by checking out this quick read from Roxy Couse, “Testing Into Your Merchandising Strategy”.

Enforce “Ladder-up” Discipline in the Field

It’s important to resist the urge to cast a wide net and document a hundred hypotheses and test ideas. That’s where discipline is needed to avoid ending up with a plethora of disconnected, tactical learnings that won’t last long.

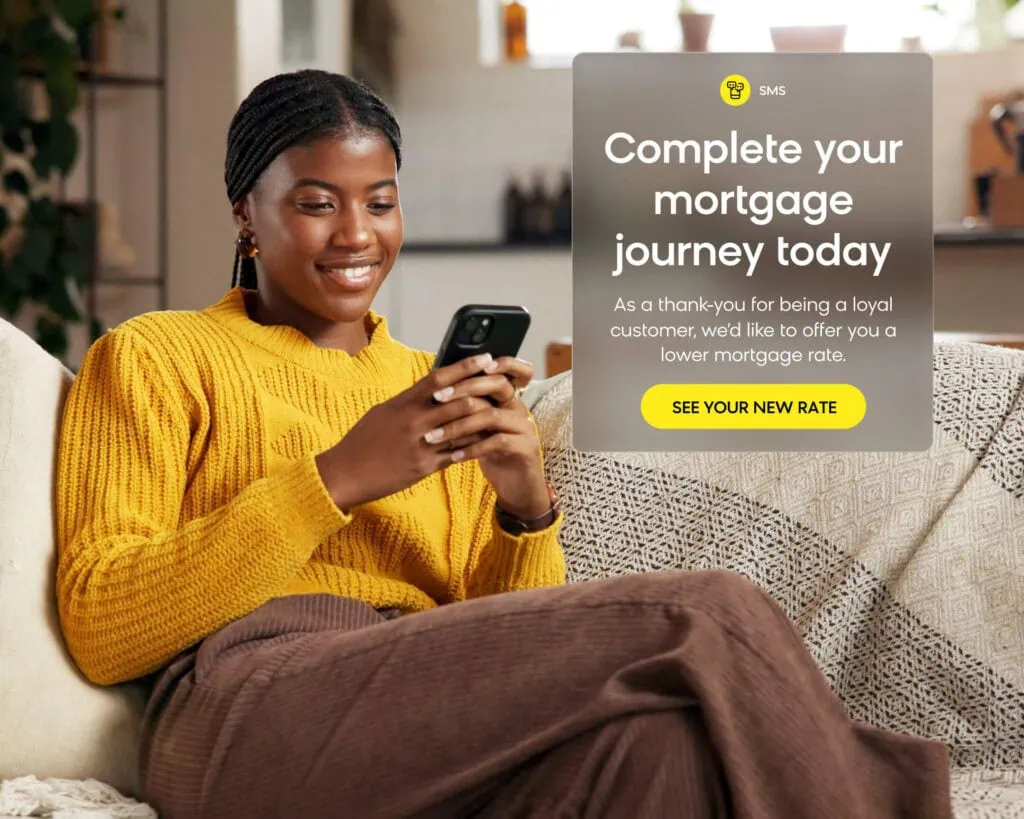

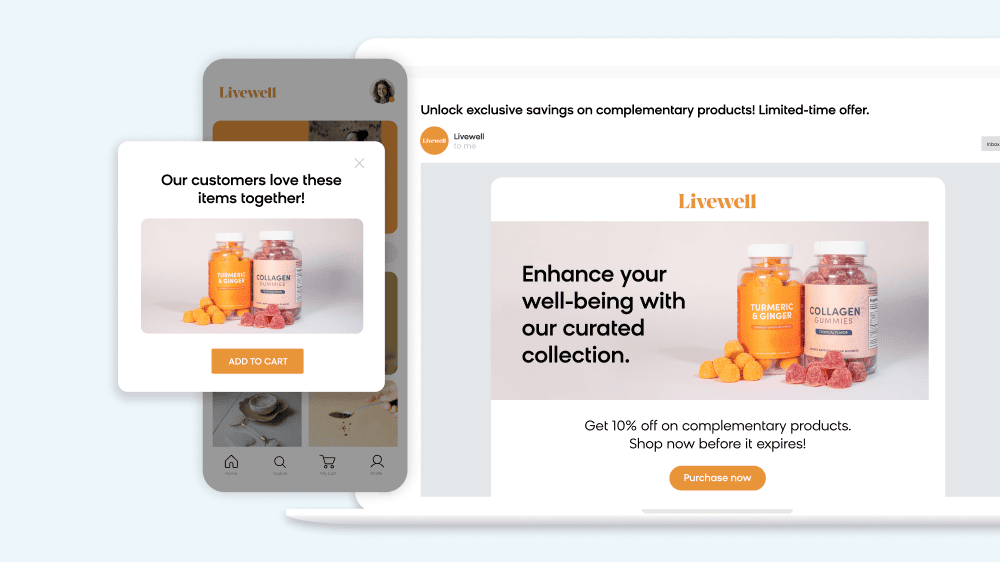

Where possible, try to ideate 5-10 tests, ideally across multiple channels, that together answer one of your higher-level learning agenda questions. Why? Because when evidence converges systematically across tests (a finding in an SEM test that agrees with an email test and also with a website test), that finding is very likely to be true.
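For teams that export their learning agenda from the shared spreadsheet, the convergence check described above could be sketched roughly as follows. This is only an illustration: the question IDs, channel names, result rows, and the three-channel threshold are all hypothetical, not part of any prescribed method.

```python
from collections import defaultdict

# Hypothetical rows exported from the shared learning agenda:
# (learning agenda question, channel, did the test support the hypothesis?)
results = [
    ("Q1", "sem", True),
    ("Q1", "email", True),
    ("Q1", "website", True),
    ("Q2", "email", True),
    ("Q2", "paid_social", False),
]

def converging_questions(results, min_channels=3):
    """Return questions supported in at least `min_channels` distinct
    channels and contradicted by none. The threshold is an assumption."""
    supported = defaultdict(set)
    contradicted = set()
    for question, channel, supports in results:
        if supports:
            supported[question].add(channel)
        else:
            contradicted.add(question)
    return sorted(
        q for q, channels in supported.items()
        if len(channels) >= min_channels and q not in contradicted
    )

print(converging_questions(results))
```

Here only the first question has agreeing evidence from three channels, so it is the only candidate "truth-point"; the second still needs more tests before the team draws a conclusion.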

Repeat this process enough, and you’ll slowly but surely reach consensus on “truth-points” surrounding your big, hairy questions. Not only will all your tests be geared toward performance optimization (which, if you think about it, 99% of tests are already), but all your sweat, tears, and dollars will finally begin delivering learnings that meaningfully unlock lasting growth.

Make These Principles Your Learning Team’s Motto

- If we test everything, we won’t learn anything meaningful
- To maximize our impact, at least 80% of all of our testing should ladder up to larger learning agenda questions
- When it comes to managing learning, keep the group small but cross-functional
- Learning is a journey that mustn’t be short-sighted: we will not give in to lesser, expedient tests that make life easier today; we will focus on what can inform our future
- Never, ever just test to test. Know when to A/B test and when to make a decision (measuring pre/post later)
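The 80% principle above is easy to track if every test in your log records which learning agenda question it ladders up to. A minimal sketch, assuming a hypothetical test log where purely tactical tests have no linked question:

```python
# Hypothetical test log: each test names the learning agenda question it
# ladders up to, or None if it is a purely tactical test.
tests = [
    {"name": "Homepage banner copy", "agenda_question": "Q2"},
    {"name": "Email send cadence", "agenda_question": "Q5"},
    {"name": "Category page attributes", "agenda_question": "Q1"},
    {"name": "Buying guide content", "agenda_question": "Q4"},
    {"name": "CTA button color", "agenda_question": None},
]

def ladder_up_share(tests):
    """Fraction of tests linked to a learning agenda question."""
    if not tests:
        return 0.0
    linked = sum(1 for t in tests if t["agenda_question"] is not None)
    return linked / len(tests)

share = ladder_up_share(tests)
meets_target = share >= 0.80  # the 80% target from the principle above
print(f"{share:.0%} of tests ladder up; target met: {meets_target}")
```

Reviewing this number at each learning team meeting is one simple way to notice when tactical tests start crowding out the agenda.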